Sum-Product for LDPC Decoding

LDPC Decoding as Canonical Sum-Product

Low-density parity-check codes are the test case for loopy BP. Their Tanner graphs are bipartite, locally tree-like for large block lengths, and enforced to have girth by code design. These are exactly the conditions under which loopy BP shines.

The point of this section is to instantiate the general sum-product rules on the specific factor graph of an LDPC code, derive the log-domain message updates, and understand why the algorithm achieves capacity-approaching performance. We will also introduce density evolution — the asymptotic tool that predicts the decoding threshold without simulation.

Definition: LDPC Factor Graph

LDPC Factor Graph

An LDPC code has a parity-check matrix . Its Tanner graph has:

- Variable nodes , one per code bit.

- Check nodes , one per parity check.

- Edge iff . The posterior given channel observations is

The channel likelihood factors are unary (attached to each variable); the parity-check factors are -ary (degree equals check degree). LDPC-BP alternates updates between variable nodes (combining channel evidence with check messages) and check nodes (enforcing parity).

Theorem: Log-Domain Check-Node Update

Let be the incoming LLR on edge . The outgoing LLR on edge is Equivalently, in sign-magnitude form: where .

The check-node update combines LLRs into one output. The sign of the output is the XOR of the incoming signs (because the check enforces ); the magnitude is dominated by the weakest incoming LLR. The nonlinearity performs a soft-min operation, which is why the min-sum approximation works well.

Parity-check factor

. Equivalently, — so the XOR of the bits is zero.

Apply sum-product

.

Fourier transform trick

On , the Fourier transform is . Parity constraint becomes (convolution in real domain = multiplication in Fourier domain).

Express in LLRs

For binary , . Writing where : . Taking gives the stated formula.

Theorem: Log-Domain Variable-Node Update

Let be the channel LLR at bit . The outgoing LLR on edge is The final posterior LLR after iterations is

Variable nodes simply add incoming LLRs — this is the log-domain analog of multiplying probabilities for independent evidence. The extrinsic rule ("exclude the target check") prevents double-counting.

Apply variable-to-factor message rule

. Take log-ratio, using that .

Log-ratios add

Since the messages factorize over independent evidence, their log-ratios add: .

LDPC Sum-Product Decoder (Log-Domain)

Complexity: Per iteration: where is the number of edges in the Tanner graph. For regular codes: . Memory: one LLR per edge.The min-sum approximation replaces by , dropping the nonlinearity at a small performance cost (typically 0.3-0.5 dB). Min-sum is the standard in hardware decoders.

LDPC BP Decoding Trajectory: BER vs. Iterations

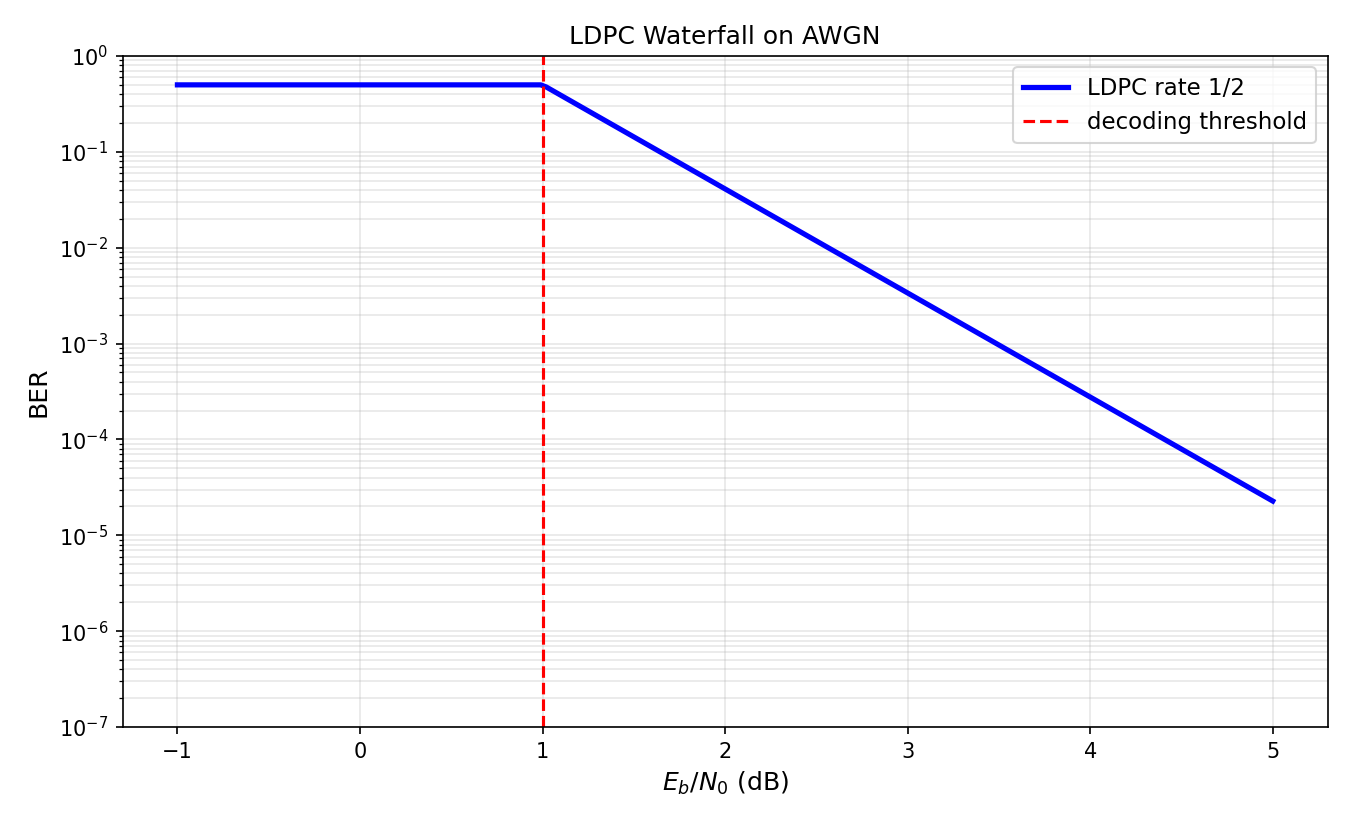

Run sum-product decoding on a random regular LDPC code over a BSC or AWGN channel. Track the bit-error rate of the current estimate across iterations. Watch the waterfall below the threshold.

Parameters

Definition: Density Evolution

Density Evolution

Density evolution tracks the distribution of BP messages across iterations in the large-, locally-tree-like limit. Let be the density of outgoing variable-to-check LLRs at iteration , and the density of check-to-variable LLRs. For a irregular ensemble: where and are specific functional transforms determined by the check-node and variable-node updates, and are the degree distributions.

Density evolution is a scalar (or functional) recursion that predicts the asymptotic BP bit-error rate as a function of channel quality. The threshold is the largest channel parameter for which (perfect recovery in the limit).

Theorem: BP Threshold (BEC)

For the Binary Erasure Channel (BEC) with erasure probability and a irregular LDPC ensemble, the density evolution recursion on the erasure probability is The BP threshold is the supremum of for which from .

On the BEC, messages are either "known" or "erased", so the scalar recursion tracks just one number: the probability that a random variable-to-check message is erased. The threshold is the channel quality at which the recursion first fails to drive erasure to zero — exactly the cliff where decoding breaks down.

BEC structure

Each message is either fully informative (known bit) or uninformative (erased). A variable-to-check message is erased iff its channel observation is erased and all other check-to-variable messages are erased.

Check-to-variable erasure probability

A check-to-variable message is erased iff any other variable-to-check input to that check is erased: for regular . Irregular version: .

Variable-to-check update

Erased iff channel erased AND all other checks erased: .

Threshold as fixed-point boundary

The map is monotone increasing. is where becomes unstable — the cliff. For , a nonzero fixed point survives: decoding fails asymptotically.

Example: Threshold of the (3,6)-Regular LDPC Code on the BEC

Compute the BP threshold of the regular LDPC ensemble on the BEC. Compare to Shannon's channel-coding bound.

Specialize density evolution

Regular code: , . Recursion: .

Find the threshold

Threshold condition: the map has only fixed point . For just above , a second fixed point appears. Numerical root-finding yields .

Compare to Shannon

Rate of a -regular code is . Shannon capacity of BEC is ; capacity-achieving requires . The gap is the cost of regularity. Irregular codes close this gap further.

LDPC Waterfall on AWGN

Irregular LDPC Codes Close the Gap

Irregular LDPC codes — with mixed variable-node degrees — can achieve thresholds arbitrarily close to Shannon capacity. The degree distribution is optimized by linear programming on the density evolution recursion (Richardson-Urbanke 2001). Modern 5G LDPC codes use carefully designed irregular ensembles with multi-edge types to optimize both threshold and error floor behavior.

Common Mistake: Uncalibrated Min-Sum Overestimates Confidence

Mistake:

Replacing the exact check-node -operation by plain min-sum without applying a scaling factor.

Correction:

Min-sum systematically overestimates the magnitude of the output LLR. Scale the output by (determined empirically) to compensate. This is called normalized min-sum and is the standard in hardware decoders. Without scaling, min-sum BP diverges or converges to overconfident errors in the waterfall region.

Historical Note: Gallager, Rediscovery, and 5G

1963-2020Robert Gallager invented LDPC codes and their iterative decoding in his 1963 PhD thesis — but computational limits of the era made the codes impractical. They were essentially forgotten for 30 years. David MacKay rediscovered them in 1995, showing that Gallager's iterative decoder achieves near-capacity performance at block lengths that modern hardware could handle. Richardson and Urbanke (2001) systematized density evolution, launching the era of code design by threshold optimization. Today LDPC is the channel code in 5G New Radio, in Wi-Fi (802.11n/ac/ax), in DVB-S2, and in NAND flash — a forty-year loop from theory to ubiquitous deployment.

5G NR LDPC Decoder Specifications

5G NR uses two LDPC base graphs (BG1 and BG2) with lifting factors to support block lengths from 40 to 8448 bits and rates from to . Commercial decoders use normalized min-sum with , layered scheduling, and 15-30 iterations. Throughput exceeds 10 Gb/s in modern 5G modems.

EXIT Charts for Iterative Decoder Design

The CommIT group contributed to developing EXIT (extrinsic information transfer) charts — a graphical dual of density evolution that tracks mutual information rather than message densities. EXIT charts enable joint design of LDPC codes with modulation, equalization, and MIMO detection in iterative receivers. They remain the workhorse tool for designing concatenated iterative systems.

Quick Check

A check node of degree receives incoming LLRs with magnitudes . In normalized min-sum BP with , what is the output magnitude (to a fifth incoming edge)?

Min-sum takes the minimum magnitude (2), times the scaling factor 0.8.

LDPC BP Decoding: Message Flow on a Tanner Graph

Key Takeaway

LDPC decoding is canonical loopy BP. The check-node update combines LLRs through a soft-XOR ( or min-sum); the variable-node update adds extrinsic LLRs. Density evolution predicts the exact decoding threshold, and irregular codes push it within hundredths of a dB of Shannon capacity. This is the theoretical backbone of modern channel coding.