Phase Transitions in Sparse Recovery

Sharp Thresholds in Sparse Recovery

In Chapter 14 we proved that the LASSO recovers a sparse signal from provided the sensing matrix satisfies the restricted isometry property (RIP) and the number of measurements exceeds .

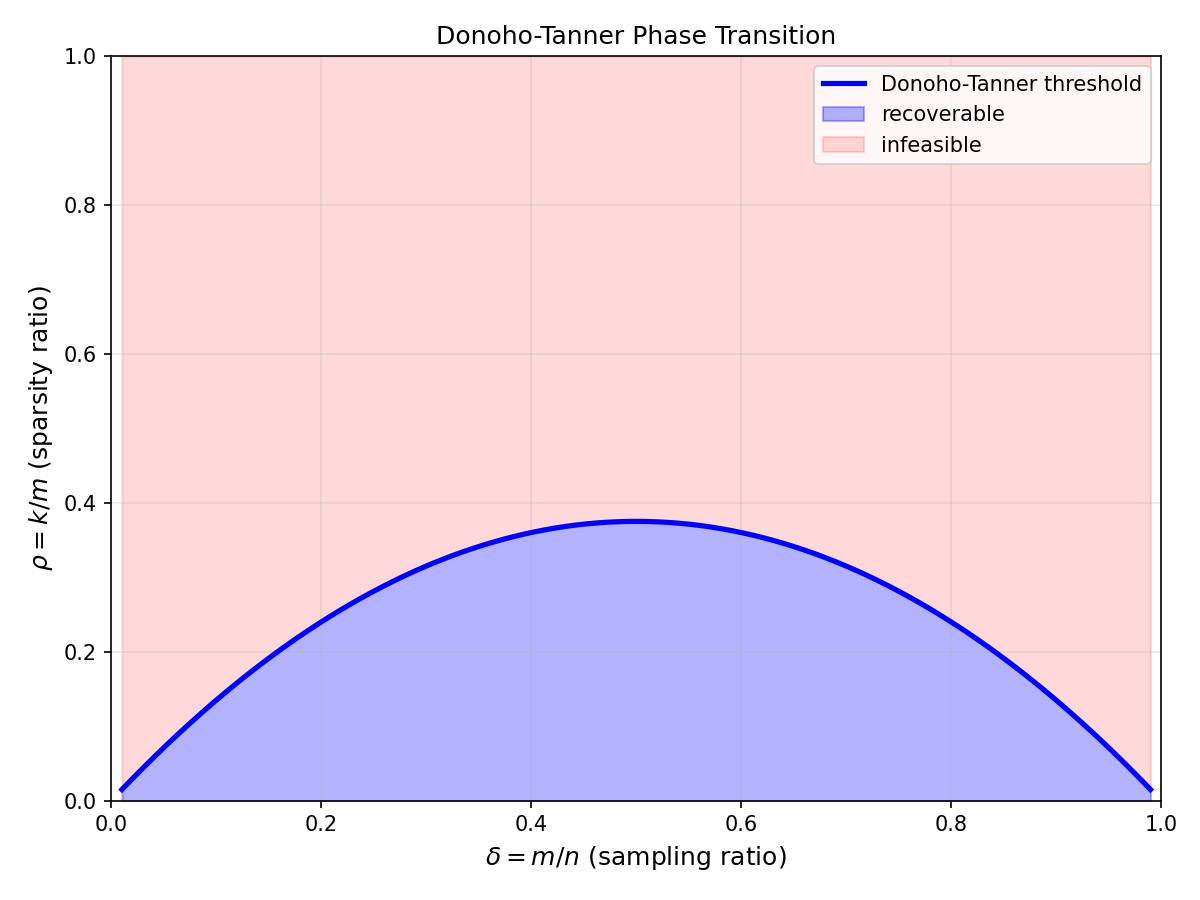

That bound is sufficient, and it captures the correct scaling. But for a system designer, the multiplicative constants are what matter: does one need or ? The point of this section is that for Gaussian , the gap between success and failure is not gradual — it is a phase transition with a sharp threshold that can be computed exactly. The Donoho-Tanner curve tells the designer whether their triple lies on the good side or the bad side of the cliff.

Definition: Phase Transition Regime

Phase Transition Regime

Consider the noiseless sparse recovery problem where has i.i.d. entries and is -sparse. Define the undersampling ratio and the sparsity ratio . The phase transition regime is the double asymptotic with fixed.

measures how aggressively we undersample relative to the ambient dimension. measures how many of the measurements are "spent" per nonzero entry. The regime with bounded is the high-compression limit.

Theorem: Donoho-Tanner Phase Transition

For with i.i.d. Gaussian entries, there exists a deterministic curve such that in the double limit : The curve is characterized implicitly by the solution of a geometric covering problem involving random convex polytopes (Donoho, 2005). Specifically, is the fraction of -faces of the cross-polytope that survive random projection to .

The transition is sharp: for any , the probability of recovery jumps from below to above as crosses in a band of width . Below the curve, minimization recovers exactly with probability one in the limit; above it, recovery fails. The curve captures the best possible tradeoff between measurements and sparsity for any convex-relaxation recovery method over Gaussian matrices.

Geometric formulation (combinatorial geometry)

-minimization succeeds at iff lies on a face of (the ball) whose image under is also a face of . The number of such "surviving" faces is a classical random-polytope quantity.

Affinely invariant face-counting

By the Affentranger-Schneider face-counting formulas for random polytopes, the expected fraction of -faces surviving is expressible in terms of external angles. These angles are computed via a scalar integral.

Sharpness via concentration

The number of violated conditions concentrates around its mean with variance , so fluctuations vanish on the scale. The sharp threshold follows.

Weak and strong thresholds

The theorem above concerns the weak threshold (recovery for a specific generic ). A strong threshold guarantees uniform recovery for all -sparse vectors simultaneously and lies strictly below .

The Donoho-Tanner Phase Transition Curve

Donoho-Tanner Curve and Empirical Phase Diagram

Overlay the theoretical curve with simulated success probability for finite . Move the slider to see the transition sharpen as grows.

Parameters

Example: Sizing a Sparse Recovery System

You need to reconstruct a signal with coefficients of which are nonzero. How many Gaussian measurements does the Donoho-Tanner theory predict you need, assuming a weak-threshold operating point?

Estimate the required $\rho$

Pick a target and solve . For (i.e., ), the Donoho-Tanner table gives . Thus requires .

Try $\delta = 0.2$

At (), . So : comfortably below. But we used twice as many measurements. The operating region depends on how close to the cliff you want to be.

Interpretation

is the minimum in the noiseless, Gaussian, asymptotic sense. In practice one adds a safety margin (30-50%) for finite- effects and noise, yielding . For a designer this translates directly into the number of sensors, ADC channels, or RF chains required.

Sparse Recovery: Theoretical vs. Practical Thresholds

| Method | Weak threshold | Noise robust? | Complexity |

|---|---|---|---|

| / LASSO | Donoho-Tanner curve (tight) | Yes (LASSO) | |

| Orthogonal Matching Pursuit | Slightly below DT curve | Moderate | |

| Approximate Message Passing (AMP) | Matches DT curve asymptotically | Yes (state evolution) | per iter |

| CoSaMP / IHT | Below DT curve | Yes | per iter |

AMP Saturates the Curve

Approximate Message Passing (Chapter 20) achieves the Donoho-Tanner curve with per-iteration complexity — dramatically faster than interior-point solvers for LASSO. This is possible because AMP's state evolution recursion provably tracks the LASSO solution in the high-dimensional limit. The practical implication: Donoho-Tanner is not just a theoretical benchmark; it is operationally achievable on problems with or larger.

Common Mistake: Non-Gaussian Sensing Matrices

Mistake:

Applying the Donoho-Tanner curve to structured sensing matrices (partial Fourier, Bernoulli, binary) without adjustment.

Correction:

The curve was derived for i.i.d. Gaussian . Partial Fourier matrices (used in MRI) exhibit a different, slightly more conservative phase transition. Bernoulli matrices are very close to Gaussian but not identical. Sub-Gaussian concentration ensures the curve is a good approximation, but constants should be verified by simulation before committing to a design.

Phase transition (in sparse recovery)

A sharp threshold in the plane separating regimes of successful and failed recovery. The width of the transition band shrinks as , so in the high-dimensional limit it is a cliff.

Related: Donoho-Tanner curve, LASSO Estimator, Restricted isometry property

Quick Check

A sensing system uses random Gaussian measurements on an dimensional signal. The Donoho-Tanner curve gives . What is the maximum -sparse signal the system can reliably recover?

gives .

Safety Margins in Practice

In deployed systems, operate with at roughly 60-70% of to tolerate noise, model mismatch, and finite- effects. AMP-based recovery with online noise-variance estimation is the standard choice when .

Key Takeaway

The Donoho-Tanner phase transition is a sharp cliff in the plane: below the curve, -recovery succeeds with probability one; above, it fails with probability one. For Gaussian sensing matrices the curve is known explicitly. It is the gold standard benchmark for any practical sparse-recovery algorithm.